AI In Construction

3/4/2026

Unprecedented hype surrounds artificial intelligence. In the thousands of articles generated by AI’s recent advancements, it is simultaneously described as a productivity miracle and job destroyer, creative cultivator and aesthetic parasite, the savior of—and existential threat to—humanity.

It is, in fact, all of those things. But for many, the relentless noise has reduced AI to an irritating buzzword. Justifiably so. It’s easy to become cynical when doomsday prophecies fail to materialize, janky software updates are branded as revolutionary breakthroughs, and your toaster is boasting of its ‘AI capabilities’.

This cacophony has sown confusion around AI’s current utility. Even those casually acquainted with a Large Language Model (LLM) like ChatGPT are aware that some kind of technological Rubicon has been crossed. Yet those same users, particularly early on, quickly encountered limitations and often mistook what AI couldn’t yet do for evidence that it could not do much at all.

But the systems behind LLMs have been relentlessly improving for years, and that same trajectory is now playing out in a separate domain of AI focused on spatial understanding: refining computer vision so that software interprets what it sees through a camera lens the same way you do with your eyes. Down that road awaits the real fun stuff. Robots. Androids. The Terminator.

Sincerest apologies, but this feature isn’t going there. Its aims are humbler: cut through bullspit marketing by clarifying for the non-technical reader how LLMs work, what their current practical applications are, and how they will soon begin to interact with spatial AI systems that allow software to understand the physical world.

First, a basic primer. Nearly all of today’s AI is built on neural networks. Think of them as computer programs designed to detect patterns by iterating through data and learning those patterns via trial and error. Say you want one to identify blueberries in images. You’d feed it millions of pics, each tagged “blueberry” or “not blueberry.”

The program converts each image into millions of numbers, creating a mathematical fingerprint. Then it makes a guess based on those numbers. Right or wrong, it then slightly adjusts the digital whorls that led to its response before moving on to the next pic. At first, it’s garbage, but after millions of attempts, it becomes startlingly accurate. Don’t fret much over the how; what’s more important for our purposes are these takeaways.
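For readers who want to see the guess-and-adjust loop in miniature, here is a rough sketch. It is a toy, not how production systems are built: each "image" is reduced to just two made-up numbers (blueness and roundness), the rule for what counts as a blueberry is invented, and every function name is hypothetical.

```python
import math
import random

def make_examples(n, rng):
    """Invent n labelled 'images', each boiled down to two numbers."""
    examples = []
    for _ in range(n):
        blueness, roundness = rng.random(), rng.random()
        # Made-up rule standing in for a human tagging "blueberry" (1) or not (0).
        label = 1 if blueness + roundness > 1.2 else 0
        examples.append(((blueness, roundness), label))
    return examples

def predict(weights, bias, features):
    """The model's guess: a probability between 0 and 1."""
    score = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-score))

def train(examples, passes=100, step=0.5):
    """Guess, measure the error, nudge the numbers, repeat."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(passes):
        for features, label in examples:
            error = predict(weights, bias, features) - label  # how wrong was the guess?
            # Shift each number slightly in the direction that shrinks the error.
            weights = [w - step * error * x for w, x in zip(weights, features)]
            bias -= step * error
    return weights, bias

def accuracy(weights, bias, examples):
    """Fraction of examples where the guess matches the tag."""
    hits = sum((predict(weights, bias, f) >= 0.5) == bool(label) for f, label in examples)
    return hits / len(examples)

rng = random.Random(0)  # fixed seed so the run is repeatable
training_set = make_examples(500, rng)
test_set = make_examples(200, rng)

untrained = accuracy([0.0, 0.0], 0.0, test_set)
weights, bias = train(training_set)
trained = accuracy(weights, bias, test_set)
print(f"before training: {untrained:.0%}, after training: {trained:.0%}")
```

The program never learns what a blueberry is. It only ends up with three numbers that happen to separate the tagged examples well.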

One: this is the foundation of all AI applications today and why data centers are popping up everywhere. It takes an ocean of information to train these things. Two: at no point is the neural network aware that it is looking at blueberries, or what a blueberry even is. A caterpillar is more sentient. Three: the same network can be trained to identify many things at once. You could simultaneously train it on peaches, strawberries, raspberries, and plums. That’s how they scale in capability. Four: you are not limited to images. Neural networks can fingerprint dense operational data just as effectively. Schedules, costs, quantities, spreadsheets, sensor readings, numbers, text and time logs are all fair game. That’s how they became commercially viable.

Tech advancements enabled this in the early 2010s, and neural networks quickly became integral components of corporate infrastructure across big tech and finance. Before long, those involved in their development and implementation realized that if you could train neural networks on almost anything, then pushing shoes on Facebook feeds was a parlor trick compared to where this could go.

If the internet reshaped society, artificial intelligence could remake it. But that required neural networks to grow beyond hawking dinnerware on Amazon and identifying blueberries in jpegs. They needed to interface with reality. To take input from the real world and output useful information for humans to use.

Research and development increasingly focused on two dominant paths: vision and language. The latter was easier. Words come pre-structured. Grammar, syntax, and repetition are baked into trillions of sentences, providing a near-infinite supply of data to iterate through.

It took about a decade to achieve breakthrough success, which arrived in late 2022, when OpenAI released ChatGPT. Within weeks, millions were treating it as an omnipotent digital sidekick, a chatbot whose communication abilities exceeded those of most humans and that was trained on the near sum total of earthly knowledge.

This is when the bulls began spitting. Within a year, Google, Meta, Anthropic, Microsoft, and xAI had released LLMs of their own. Social media was flooded with jargon-soaked viral marketing blitzes designed to draw in users by arguing over which was better. A wave of “AI-powered” apps instantly followed, most little more than thin wrappers around an existing LLM, just repackaged and renamed.

It’s also when the fear began. A chorus of doomsday prophecies accompanied ChatGPT’s launch, and it has only grown louder. There is some truth in this. It’s crystal clear that AI has unmoored a centuries-old connection between expertise, salary, and employment. Some jobs suddenly seem superfluous. It will change how the world works.

But overcoming all this is actually quite simple. Ignore it. All of it. For the average user, the LLMs are all pretty much one and the same, and if you spend enough time using one, you’ll be able to spot the snake-oil apps from a mile away. Also, major tech advances have always been accompanied by Armageddon fears. Fretting over that is like worrying about a meteor strike. It could happen, but what can you do?

Additionally, anxiety is dulled by regular use. LLMs have concrete limitations that become apparent to anyone engaging with them. They may initially pass as digital gods, but remember our caterpillar. Neural networks don’t actually know anything, and that’s what LLMs like ChatGPT are: statistical systems trained to predict the next word based on patterns.
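To make "predict the next word based on patterns" concrete, here is a deliberately crude sketch. It simply counts which word follows which in a few invented sentences. Real LLMs use enormous neural networks rather than a lookup table, so treat this as an illustration of the idea only; the function names and the tiny corpus are made up.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    options = following.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]

# Invented training text; a real model sees trillions of words.
corpus = (
    "the crew poured the slab . "
    "the crew poured the footing . "
    "the crew framed the wall . "
    "the inspector approved the slab ."
)
model = train_bigrams(corpus)

print(predict_next(model, "crew"))   # poured
print(predict_next(model, "rebar"))  # None
```

The table has no idea what a crew or a slab is. It answers "poured" only because that word followed "crew" most often, and it has nothing to say about a word it never saw.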

This is why they hallucinate and struggle with logic issues a child can grasp, even though their predictions are usually so good that you cannot shake the feeling of hyper-intelligence. But that’s an illusion. A spell. And it’s broken when you ask one how many r’s are in blueberries or what type of snake you should buy your 13-month-old daughter.

None of that matters for individuals because LLMs are fun to talk to and incredible skill enhancers. For businesses, however, it’s a real problem. LLMs cannot reliably reason over complex, multi-step systems. On their own, they cannot be trusted with money and reputation on the line. So, how to incorporate them?

These limitations largely apply to judgement, not execution. If told to do a specific, narrowly defined task, LLMs become incredible assistants. They can write a clean e-mail, accurately summarize long articles, rephrase technical language into plain English, and generate outlines, talking points, or first drafts for projects that would otherwise start from a blank page.

These alone carve hours off the weekly workload, but they are also an LLM’s most basic applications. Far more powerful (and underutilized) is an LLM’s ability to code and to be configured for specific jobs. LLMs configured this way are called agents: an agent is simply an LLM like ChatGPT or Claude that has been piped into an application via an API and given the ability to act inside that application based on predefined instructions.

This sounds mundane, but it’s the key to unlocking the judgement gate. That’s because agents’ tasks can be stacked for massive productivity gains. For instance, an agent can be programmed to examine incoming emails looking for invoices to save into the correct project folder. It has no ability to invent information or deviate from this task, which also includes an instruction to notify another agent when an invoice is received. That agent’s only job is to enter the invoice amount and due date into a payment list and then notify a third agent, whose sole purpose in life is to notify the project manager that a new invoice is ready.

Together, the three have completed a mundane task that takes up precious cognitive labor. Now pull the camera back: a business can deploy thousands of agents, each responsible for a single small task, quietly handling the tedious work that keeps every operation moving.
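The invoice hand-off described above can be sketched in a few lines. This is a simplified illustration, not a real agent framework: in practice an LLM would read the email and pull out the amount and due date, whereas here those fields arrive pre-filled. All class names, function names, and sample data are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Email:
    subject: str
    project: str
    amount: float
    due_date: str
    is_invoice: bool  # assumption: in a real system an LLM would decide this

@dataclass
class Workspace:
    """Stand-in for the real project folders, payment list, and PM inbox."""
    folders: dict = field(default_factory=dict)
    payment_list: list = field(default_factory=list)
    notifications: list = field(default_factory=list)

def filing_agent(email, ws):
    """Agent 1: save invoices to the right project folder, then hand off."""
    if not email.is_invoice:
        return  # anything that is not an invoice is ignored
    ws.folders.setdefault(email.project, []).append(email.subject)
    payment_agent(email, ws)

def payment_agent(email, ws):
    """Agent 2: record the amount and due date, then hand off."""
    ws.payment_list.append((email.project, email.amount, email.due_date))
    notify_agent(email, ws)

def notify_agent(email, ws):
    """Agent 3: tell the project manager a new invoice is ready."""
    ws.notifications.append(
        f"New invoice ready for {email.project}: ${email.amount:,.2f} due {email.due_date}"
    )

ws = Workspace()
inbox = [
    Email("Invoice 1042 - drywall", "Elm Street Clinic", 18450.00, "2026-04-15", True),
    Email("Lunch on Friday?", "Elm Street Clinic", 0.0, "", False),
]
for message in inbox:
    filing_agent(message, ws)

print(ws.notifications)
```

Each function does exactly one thing and passes the baton. That narrowness is the point: a single-purpose step is easy to check, and the chain as a whole still finishes the job.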

Agents can be implemented in myriad ways; the most straightforward is to use a workflow automation platform like Zapier or Make.

“These platforms allow people who don’t know how to code to safely connect the tools and software apps they already have so they can complete step-by-step, fully automated workflows,” said Zach Giglio, CEO of GCM, an AI consulting firm.

Giglio notes that most businesses operate across multiple systems. Workflow automation platforms enable those systems to talk to one another, and their pre-built AI agents allow businesses to take that connectivity further.

“A general contractor might receive a request for a proposal through a dozen different channels. Something like Zapier can take those incoming requests and using AI agents, automatically generate structured emails to subcontractors with the relevant scope attached, clearly outlining what’s needed, what the deadline is, and what they’re being asked to bid on. At the same time, it can create calendar reminders for when bids are due and update internal tracking systems, so nothing slips through the cracks.”

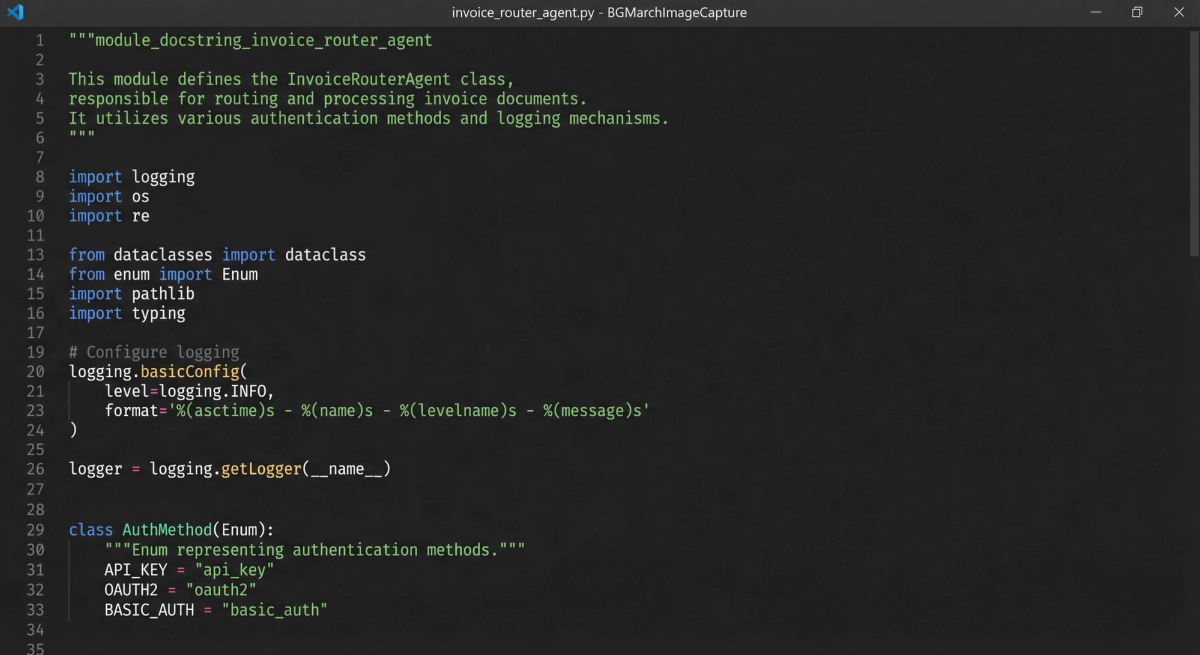

Agents can also be piped into internal development environments or code repositories and write code. Scores of businesses have ideas for simple internal tools, but translating them into working software solutions has historically been prohibitively expensive or time-consuming.

That’s done. LLMs were trained on every major programming language and have obliterated the barrier to entry for software creation. There is a learning curve to this method, but it isn’t too steep. Once mastered, you can simply tell the AI what you want to build, and it will do it. A marketing intern can prototype a web scraper for leads. The admin assistant can build automated recurring financial reports. An editor of a construction trade magazine can deploy a complex CRM system for organizing sponsorships and billing cycles.

The kids call this vibe coding. Thus far, it’s the most professionally transformative thing about LLMs. But beware, there are deep pitfalls on this path and walking it blindly can end in disaster.

“The biggest risk is security,” says Colin Wiehagen, a managing consultant at 4C Technologies. The Pittsburgh-based software developer builds systems for numerous businesses across the region, including in the construction industry.

“You absolutely have to understand what secure practices are and how it aligns with your internal InfoSec policies. What kind of risks exist, not just from a coding standpoint but from a data standpoint. And if you don’t know what’s under the hood, you don’t know any of that.

“If the cost of failure is low, life is good. If you’re experimenting with spreadsheets or internal tools and something breaks, you throw it away and move on with your day. Where it becomes a real problem is when people take that same mindset and apply it to systems that run operations, handle sensitive data, or impact customers directly.”

Legitimate security and implementation concerns are restraining this LLM-driven back-office revolution, but the capability exists. And it will go further as neural networks become better trained to understand the physical world, converting the jobsite itself into data that the office can query, analyze, and act on.

This is a far more daunting task than learning language. Aspects of vision that humans take for granted, like depth, movement, and object permanence, are not and have never been labeled or broken down in images. Researchers had to manufacture and then organize that data on a colossal scale, making neural network training significantly more costly and time-consuming.

Consequently, spatially aware AI lags behind LLMs and has not yet had its ChatGPT breakthrough. But major venture capital firms are putting hundreds of millions into advancing this research for more practical use, including in construction. These models are beginning to act on the jobsite, with particular advancements in reality capture software.

For decades, construction teams relied on photographs to document jobsite progress, creating useful visual records but only as isolated snapshots with no reliable way to anchor them digitally. Laser scanners and later photogrammetry introduced the ability to generate precise 3D models, but both required deliberate, manual capture, with crews returning to the same locations to recreate viewpoints over time. Even then, maintaining consistency was difficult and time-consuming, because accurately positioning images inside a building has always been the central challenge, and GPS does not function reliably indoors.

“There have been a ton of approaches to get indoor positioning,” says Michael Fleischman, CEO of OpenSpace. His company is breaking new ground in the field of spatial AI, training its neural network on digital imagery from 80,000 projects representing more than 50 billion square feet of spatial data.

“But most of those have high hardware requirements. GPS really struggles indoors. You put these Bluetooth beacons in various specific points on your jobsite and for construction, that can be really challenging. Walls go up, they come down, things get damaged. What AI enables is a hardware free solution. Now, all you do is walk the site with a hardhat-mounted 360 camera.”

The breakthrough isn’t the camera. It’s the neural network. By training on its enormous corpus of spatial data, the system learns to recognize where it is inside a building and anchor each image accordingly—fingerprinting the jobsite itself.

It works like this: a construction super walks the site with that hardhat-mounted 360 camera, and the spatial AI system determines where each image was taken by comparing what the camera sees to the project’s floor plans and known geometry. Every image is anchored to a precise location and timestamp, turning thousands of disconnected photos into a structured visual map of the building as it actually exists.

This spatial grounding transforms jobsite imagery from passive documentation into an operational record. Instead of relying on memory, incomplete notes, or scattered phone photos, teams can see exactly what existed in any location at any moment in time. Project managers can verify whether work was completed before it was covered, resolve disputes over responsibility, track installation progress, and identify issues earlier. Jobsite photos taken on cell phones get autoplaced in the appropriate location, as do voice memos and field notes.

“You can kind of think of this as an early stage spatially aware AI agent,” says Fleischman.

“The construction industry benefits so much from what technology can bring to it in terms of efficiency improvements. And with the cost of constructing just continuing to rise over the last few decades, there’s a lot of potential to make an impact.”

Once spatial AI is no longer in its toddler era, reality capture will evolve into something else entirely: an operating system with a perfect and queryable record of every site’s progress that is connected to project management and enterprise resource planning systems. This means you will be able to ask things like “show me all the ductwork on level three near Grid D/E,” “confirm whether backing was installed on wall four before drywall went up,” or “show me every room where insulation hasn’t been installed yet,” and get results with visuals.
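What "queryable" could mean in practice is easier to see with a small sketch. The example below is purely hypothetical and is not based on any vendor's product: it assumes each capture has already been anchored to a level, room, and date, and tagged with what is visible. It then answers the insulation question from the paragraph above.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class Capture:
    """One anchored jobsite image (all fields are illustrative assumptions)."""
    image: str
    level: int
    room: str
    captured_on: date
    tags: frozenset  # what the spatial AI is assumed to have recognized

def rooms_missing(captures, level, tag):
    """Rooms on a level whose most recent capture does not show `tag`."""
    latest = {}
    for c in captures:
        if c.level != level:
            continue
        # Keep only the newest capture for each room.
        if c.room not in latest or c.captured_on > latest[c.room].captured_on:
            latest[c.room] = c
    return sorted(room for room, c in latest.items() if tag not in c.tags)

# Invented sample data for a two-level building.
captures = [
    Capture("a.jpg", 3, "301", date(2026, 2, 2), frozenset({"framing"})),
    Capture("b.jpg", 3, "301", date(2026, 2, 16), frozenset({"framing", "insulation"})),
    Capture("c.jpg", 3, "302", date(2026, 2, 16), frozenset({"framing"})),
    Capture("d.jpg", 2, "201", date(2026, 2, 16), frozenset({"framing"})),
]

print(rooms_missing(captures, level=3, tag="insulation"))  # ['302']
```

The hard part is everything this sketch takes for granted: placing each image correctly and recognizing what it shows. Once that is done, the question itself becomes a simple lookup.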

Now for some speculative fun. Let’s connect the dots back to the back-office revolution. Imagine an LLM as the operating system for your business, trained on and able to act through everything that exists in your company’s data. All financial records, staffing information, blueprints, BIM models, reality capture systems, contacts, contracts, enterprise platforms, et cetera.

It understands them more completely than any one person at the company could and can communicate with and act through all of them via an army of agents, each trained on completing single small tasks.

Once this happens, operations will streamline in ways difficult to conceive. For instance: your super walks a site with AI-equipped glasses, whose built-in spatial capture agent flags a crack on wall four of the second floor and tags its exact location along with imagery. Then it passes this to a specification agent, which cross-references the wall and determines it is a structural shear wall, so it escalates the issue to a risk-classification agent, which identifies it as requiring engineer review and hands it off to an enterprise agent, which assigns ownership to the contractor of record after generating a structured punch list prefilled with location, spec references, and supporting imagery. Then a coordinating agent takes over and notifies the project manager and superintendent, highlighting downstream impacts on inspections and follow-on trades before handing off to a verification agent, which schedules a spatial re-scan to confirm resolution. All of this occurs before the site walk is concluded.

Artificial intelligence is neither apocalypse nor gimmick. Cut out the noise and confusion and LLMs are infrastructure. Already, they are accelerating communication, collapsing the cost of software creation, and powering agents that handle cognitive labor once done by entire teams. As spatial AI reaches the jobsite, construction itself is becoming fully visible and queryable for the first time.

The implications of that are difficult to overstate. The noise surrounding AI isn’t going away. If anything, it will get louder. New products. New promises. New fears. Some of it justified but much of it not. Beneath all of it though, something very real is taking shape. Neural networks are fingerprinting the world. The companies and workers who understand how to harness that won’t be replaced; they’ll build what comes next.